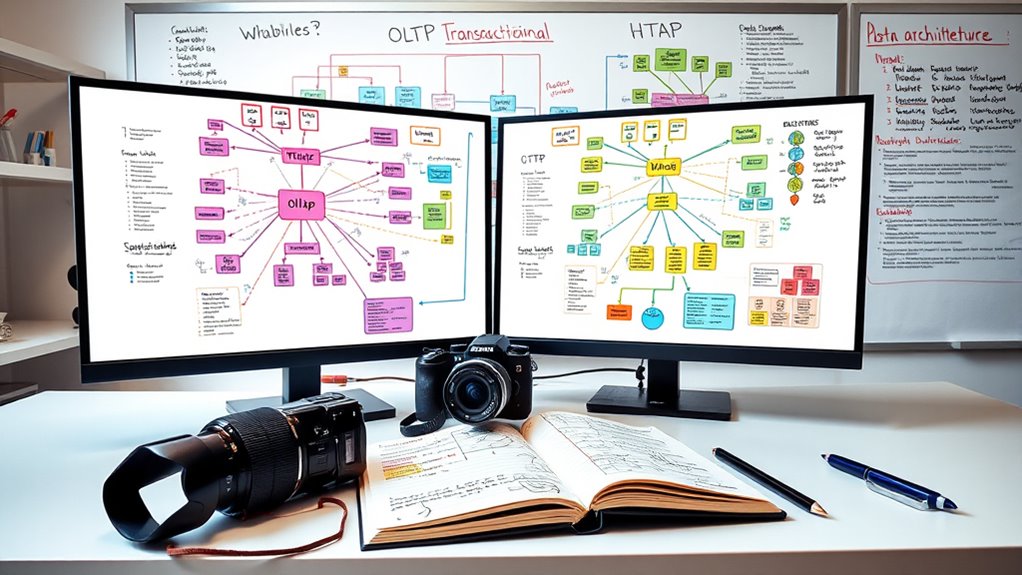

Choosing the right database pattern depends on your specific needs. If you require fast, reliable transactions like orders or banking, OLTP is best. For large-scale data analysis and deep insights, OLAP handles complex queries efficiently. If you need both in real-time, HTAP combines transactional and analytical workloads. Understanding these differences helps you optimize performance and consistency. Keep exploring to find out which approach fits your business goals best.

Key Takeaways

- OLTP is ideal for high-speed, transactional operations requiring immediate consistency and data integrity.

- OLAP suits complex, read-heavy analytical queries over large datasets for strategic insights.

- HTAP combines transactional and analytical processing to enable real-time insights with balanced performance.

- Choose OLTP for fast, reliable transactions; OLAP for deep data analysis; HTAP for real-time transactional and analytical needs.

- Consider workload type, data freshness, latency requirements, and system complexity when selecting the appropriate pattern.

As an affiliate, we earn on qualifying purchases.

Understanding OLTP: The World of Transactional Databases

Transactional databases are the backbone of many modern applications, handling high-volume, short-lived operations that require immediate consistency. You rely on them for tasks like processing banking transactions, online shopping carts, and user account updates. They’re optimized for quick, small changes—such as inserts, updates, and deletes—ensuring data stays accurate and reliable. These systems follow strict ACID principles, which guarantee data integrity even during failures or concurrent operations. You’ll notice they use normalized schemas and row-oriented storage to support fast point lookups and efficient updates. Response times are measured in milliseconds, even under heavy load, thanks to specialized indexing and concurrency controls. Their main goal is to deliver consistent, durable data quickly, making them essential for any environment where data integrity and speed matter.
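
To make atomicity concrete, here is a minimal sketch using Python's built-in `sqlite3` module. The `accounts` table, the `transfer` and `balances` helpers, and the no-overdraft rule are hypothetical illustrations rather than part of any particular product; SQLite simply serves as a small ACID-compliant engine.

```python
import sqlite3


def make_bank() -> sqlite3.Connection:
    """Create an in-memory database with two hypothetical accounts."""
    conn = sqlite3.connect(":memory:")
    conn.execute(
        "CREATE TABLE accounts ("
        " id INTEGER PRIMARY KEY,"
        " balance INTEGER NOT NULL CHECK (balance >= 0))"  # no overdrafts allowed
    )
    conn.executemany(
        "INSERT INTO accounts (id, balance) VALUES (?, ?)", [(1, 100), (2, 50)]
    )
    conn.commit()
    return conn


def transfer(conn: sqlite3.Connection, src: int, dst: int, amount: int) -> bool:
    """Move `amount` from src to dst as one atomic transaction."""
    try:
        # Used as a context manager, the connection commits if the block
        # succeeds and rolls back if it raises.
        with conn:
            # Credit first, so a failed debit has to undo work already done.
            conn.execute(
                "UPDATE accounts SET balance = balance + ? WHERE id = ?", (amount, dst)
            )
            conn.execute(
                "UPDATE accounts SET balance = balance - ? WHERE id = ?", (amount, src)
            )
        return True
    except sqlite3.IntegrityError:
        # The CHECK constraint rejected the debit; the credit was rolled back too.
        return False


def balances(conn: sqlite3.Connection) -> dict:
    return dict(conn.execute("SELECT id, balance FROM accounts ORDER BY id"))


conn = make_bank()
transfer(conn, 1, 2, 30)   # succeeds
transfer(conn, 1, 2, 500)  # fails and leaves both balances untouched
print(balances(conn))      # {1: 70, 2: 80}
```

Crediting before debiting is deliberate: when the debit violates the `CHECK` constraint, the rollback also undoes the credit that already ran, so no reader ever sees a half-finished transfer.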

Exploring OLAP: The Engine of Data Analysis and Business Intelligence

Have you ever wondered how large organizations turn vast amounts of data into actionable insights? That’s where OLAP comes in. It’s designed for complex, read-heavy analytical queries over huge datasets. Unlike OLTP, which handles everyday transactions, OLAP focuses on aggregations, multi-dimensional analysis, and long-running scans. You’ll find OLAP systems often use denormalized star or snowflake schemas with columnar storage, which speeds up scans and compresses well. They underpin large-scale data warehouses, enabling you to analyze historical trends, perform complex joins, and generate reports, and they deliver high throughput for large scans and aggregations, often by spreading the work across distributed nodes. They’re essential for business intelligence, data mining, and strategic decision-making, helping you extract meaningful insights from your data rather than just manage transactions.
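
The star-schema shape can be sketched with `sqlite3` as well. Keep in mind that SQLite is a row store, so this only illustrates the schema and the join-then-aggregate query pattern, not columnar performance; all table and column names are hypothetical.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.executescript("""
    -- Dimension table: descriptive attributes, one row per product.
    CREATE TABLE dim_product (product_id INTEGER PRIMARY KEY, category TEXT NOT NULL);
    -- Dimension table: one row per calendar date.
    CREATE TABLE dim_date (date_id INTEGER PRIMARY KEY, year INTEGER NOT NULL);
    -- Fact table: one row per sale, with foreign keys into the dimensions.
    CREATE TABLE fact_sales (
        product_id INTEGER REFERENCES dim_product(product_id),
        date_id    INTEGER REFERENCES dim_date(date_id),
        amount     REAL NOT NULL
    );
""")
conn.executemany("INSERT INTO dim_product VALUES (?, ?)", [(1, "books"), (2, "games")])
conn.executemany("INSERT INTO dim_date VALUES (?, ?)", [(20230101, 2023), (20240101, 2024)])
conn.executemany(
    "INSERT INTO fact_sales VALUES (?, ?, ?)",
    [(1, 20230101, 10.0), (1, 20240101, 15.0), (2, 20240101, 40.0), (2, 20240101, 5.0)],
)

# Typical OLAP query: join the fact table to its dimensions, then aggregate.
REVENUE_BY_YEAR_AND_CATEGORY = """
    SELECT d.year, p.category, SUM(f.amount) AS revenue
    FROM fact_sales AS f
    JOIN dim_date    AS d ON d.date_id = f.date_id
    JOIN dim_product AS p ON p.product_id = f.product_id
    GROUP BY d.year, p.category
    ORDER BY d.year, p.category
"""
rows = conn.execute(REVENUE_BY_YEAR_AND_CATEGORY).fetchall()
print(rows)  # [(2023, 'books', 10.0), (2024, 'books', 15.0), (2024, 'games', 45.0)]
```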

What Is HTAP? Combining Transactional and Analytical Workloads

HTAP, or Hybrid Transactional/Analytical Processing, integrates both transactional and analytical workloads within a single system, enabling real-time data processing and insights. This setup allows you to run high-speed transactions while simultaneously performing complex analytics without data movement delays. You can respond quickly to business needs, detect fraud, or update dashboards instantly. However, balancing OLTP and OLAP workloads can be challenging, risking system contention or degraded performance if not managed carefully.

| Exciting Possibilities | Potential Challenges |
|---|---|
| Real-time decision making | Performance trade-offs |
| Faster insights on fresh data | System complexity |
| Unified data architecture | Resource contention |
| Reduced ETL delays | Managing workload interference |
| Streamlined operations | Ensuring data consistency |
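
One way to picture the dual-store idea behind many HTAP engines is the toy class below: every write updates a row store for point lookups and a column store for scans, so analytics see fresh data without an ETL step. This is a single-threaded sketch under simplifying assumptions (a hypothetical `DualStore` class, synchronous updates, no concurrency control or durability); real systems differ in how and when they propagate changes between stores.

```python
from dataclasses import dataclass, field


@dataclass
class DualStore:
    """Toy HTAP-style store: each write updates a row store and a column store."""

    # Row store: primary key -> full record, suited to point lookups and updates.
    rows: dict = field(default_factory=dict)
    # Column store: column name -> list of values, suited to scans over one column.
    columns: dict = field(default_factory=lambda: {"id": [], "amount": []})
    # Position of each key inside the column lists, so updates land in place.
    position: dict = field(default_factory=dict)

    def upsert(self, order_id: int, amount: float) -> None:
        self.rows[order_id] = {"id": order_id, "amount": amount}
        if order_id in self.position:
            self.columns["amount"][self.position[order_id]] = amount
        else:
            self.position[order_id] = len(self.columns["id"])
            self.columns["id"].append(order_id)
            self.columns["amount"].append(amount)

    def get(self, order_id: int) -> dict:
        # Transactional read: one record by key from the row store.
        return self.rows[order_id]

    def total_amount(self) -> float:
        # Analytical read: scan a single column in the column store.
        return sum(self.columns["amount"])


store = DualStore()
store.upsert(1, 10.0)
store.upsert(2, 5.5)
store.upsert(1, 12.0)  # the update is visible to both read paths immediately
print(store.get(1), store.total_amount())  # {'id': 1, 'amount': 12.0} 17.5
```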

How Data Models and Storage Methods Differ Across Patterns

Different data models and storage approaches are tailored to the access patterns and performance requirements of OLTP, OLAP, and HTAP systems. OLTP systems use normalized, row-oriented storage to support fast, small transactions and maintain data integrity; their schemas are designed for efficient updates and minimal redundancy. OLAP systems, by contrast, favor denormalized star or snowflake schemas with columnar storage, which accelerates large scans and aggregations and compresses well. HTAP systems often combine both storage types in a dual-store pattern: a row store for transactional workloads and a column store for analytical tasks. Giving each workload the layout that suits it reduces contention and improves overall efficiency. The choice of data model and storage directly influences query speed, scalability, and system complexity.
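
The difference between row and column layouts can be shown with plain Python structures. This is a conceptual sketch with hypothetical data: Python objects don't reproduce on-disk page layouts, but the access pattern is the same idea, with a point lookup fetching one whole record and an aggregate reading a single contiguous column.

```python
from array import array

# Row-oriented: each order is stored as one record (like an OLTP row store),
# keyed by order id so a point lookup is a single access.
row_store = {
    1: (1, "alice", 30.0),
    2: (2, "bob", 12.5),
    3: (3, "carol", 7.5),
}

# Column-oriented: each attribute is stored contiguously (like an OLAP column store).
column_store = {
    "id": array("q", [1, 2, 3]),
    "customer": ["alice", "bob", "carol"],
    "amount": array("d", [30.0, 12.5, 7.5]),
}


def lookup_row(order_id: int) -> tuple:
    # Row layout: the whole record comes back from one place.
    return row_store[order_id]


def lookup_from_columns(position: int) -> tuple:
    # Column layout: rebuilding one record means visiting every column.
    return tuple(column_store[name][position] for name in ("id", "customer", "amount"))


def total_from_rows() -> float:
    # On disk, a row store reads pages holding whole records even for one field.
    return sum(record[2] for record in row_store.values())


def total_from_columns() -> float:
    # A column store reads only the single array the aggregate needs.
    return sum(column_store["amount"])
```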

Performance and Latency Expectations for Each Approach

Each database approach sets distinct expectations for performance and latency. OLTP systems target millisecond response times while handling thousands of short transactions concurrently, thanks to optimized indexes and normalized schemas. OLAP systems prioritize throughput for large-scale scans and aggregations, with query durations ranging from seconds to minutes, supported by columnar storage and pre-aggregated data. HTAP aims to balance both, offering sub-second responses for transactional queries while also serving analytical workloads, but its performance depends on how resources are allocated and how well the workloads are separated. Heavy analytical queries on a transactional system can cause contention, increasing latency and risking SLA violations. To manage this, organizations often physically separate OLTP and OLAP systems or apply resource controls. Ultimately, each approach’s performance is shaped by workload type, system design, and operational priorities.
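
You can see the two access patterns in a query planner. The sketch below uses SQLite's `EXPLAIN QUERY PLAN` on a hypothetical `orders` table. It does not measure latency (that depends on hardware and data size), but it shows that the OLTP-style lookup is resolved through an index while the aggregate has to scan every row. The exact wording of the plan text varies between SQLite versions.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE orders (id INTEGER PRIMARY KEY, customer_id INTEGER, amount REAL)")
conn.execute("CREATE INDEX idx_orders_customer ON orders (customer_id)")
conn.executemany(
    "INSERT INTO orders (customer_id, amount) VALUES (?, ?)",
    [(i % 100, float(i)) for i in range(10_000)],
)


def plan(sql: str, params: tuple = ()) -> list:
    # Each EXPLAIN QUERY PLAN row has four columns; the last is the readable detail.
    return [row[3] for row in conn.execute("EXPLAIN QUERY PLAN " + sql, params)]


# OLTP-style point query: answered by searching the index.
point_plan = plan("SELECT amount FROM orders WHERE customer_id = ?", (42,))
# OLAP-style aggregate: must read every row of the table.
scan_plan = plan("SELECT SUM(amount) FROM orders")

print(point_plan)
print(scan_plan)
```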

Ensuring Data Consistency and Durability in Different Systems

You need to understand how each system guarantees data consistency and durability to meet your application’s requirements. OLTP systems prioritize strict transactional guarantees, while OLAP systems often accept eventual consistency for faster analysis. Balancing these trade-offs is vital to ensure your data remains accurate and reliable across different workloads.

Transactional Data Guarantees

Ensuring data consistency and durability is fundamental to maintaining trust in transactional systems. In OLTP, you rely on strict ACID guarantees (atomicity, consistency, isolation, and durability) to keep data accurate and safe. These systems typically use write-ahead logging (WAL): each change is recorded in a transaction log that is flushed to durable storage before the change is treated as committed, so committed work can be recovered by replaying the log after a failure. In contrast, OLAP systems prioritize reproducibility and correctness of large analytical results, often accepting eventual consistency. HTAP combines both worlds, balancing immediate transaction guarantees with analytical stability.
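
The write-ahead idea can be sketched in a few lines: log the change and force it to disk, then apply it, and rebuild state by replaying the log after a restart. `TinyWAL` is a hypothetical toy that assumes a single writer and a JSON-lines log file; production WALs add checkpoints, log sequence numbers, and page-level records.

```python
import json
import os


class TinyWAL:
    """Toy key-value store that makes each write durable in a log before applying it."""

    def __init__(self, log_path: str):
        self.log_path = log_path
        self.data: dict[str, str] = {}
        self.recover()

    def recover(self) -> None:
        # Rebuild in-memory state by replaying every complete record in the log.
        self.data.clear()
        if not os.path.exists(self.log_path):
            return
        with open(self.log_path, "r", encoding="utf-8") as log:
            for line in log:
                try:
                    record = json.loads(line)
                except json.JSONDecodeError:
                    break  # a torn (partially written) record marks the end of the log
                self.data[record["key"]] = record["value"]

    def put(self, key: str, value: str) -> None:
        record = json.dumps({"key": key, "value": value})
        with open(self.log_path, "a", encoding="utf-8") as log:
            log.write(record + "\n")
            log.flush()
            os.fsync(log.fileno())  # durability point: the record is on disk
        # Only after the log record is durable is the change applied in memory.
        self.data[key] = value
```

Because `put` returns only after `os.fsync`, a crash can lose at most a write that was never acknowledged, and `recover` skips a torn final line instead of failing.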

Analytical Data Stability

Maintaining data stability in analytical systems requires careful handling of consistency and durability, especially since these systems often process large volumes of historical data. You need to guarantee that data remains accurate and available over time, even during failures or updates. To achieve this, consider:

- Using immutable storage formats like columnar files and checkpoints for reliable snapshots

- Implementing periodic data refreshes to balance freshness and stability

- Employing replication and backup strategies to prevent data loss

- Managing versioning and reconciliation processes for consistent analytical results

Balancing these approaches helps prevent data corruption, guarantees reproducibility of reports, and maintains trust in your analytics. Properly designed durability and stability mechanisms are essential for accurate decision-making and long-term data integrity.
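
Versioned, immutable snapshots are what make reruns of a report reproducible. The sketch below is a hypothetical in-memory stand-in for snapshot-based storage: each periodic refresh publishes a new frozen version, and a report pinned to a version always sees the same data.

```python
class SnapshotStore:
    """Keeps every refresh of an analytical dataset as an immutable, numbered version."""

    def __init__(self):
        self._versions: list[tuple] = []

    def refresh(self, rows) -> int:
        # Each refresh publishes a new frozen copy; earlier versions are never edited.
        self._versions.append(tuple(tuple(r) for r in rows))
        return len(self._versions) - 1

    def read(self, version: int) -> tuple:
        return self._versions[version]

    def latest(self) -> int:
        return len(self._versions) - 1


def revenue_report(store: SnapshotStore, version: int) -> float:
    # A report pinned to a version returns the same answer every time it is rerun.
    return sum(amount for _, amount in store.read(version))


store = SnapshotStore()
v0 = store.refresh([("a", 10), ("b", 5)])
v1 = store.refresh([("a", 10), ("b", 5), ("c", 7)])
print(revenue_report(store, v0), revenue_report(store, v1))  # 15 22
```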

Consistency Models Trade-offs

Different database systems adopt various consistency models to balance data accuracy, availability, and performance. In OLTP systems, strict ACID guarantees ensure data correctness, with synchronous commits and transaction logs maintaining durability; these systems prioritize consistency over availability during failures. OLAP systems often relax consistency to achieve higher throughput, accepting eventual consistency (for example, data refreshed periodically rather than on every write) while keeping reports reproducible. HTAP systems try to balance both, often offering configurable consistency levels, strong or eventual, depending on workload needs. This flexibility helps deliver real-time analytics without compromising transactional integrity. However, trade-offs exist: stronger consistency can add latency and reduce concurrency, while weaker models improve performance but risk reading stale or anomalous data. Understanding these trade-offs helps you choose the right system for your application’s requirements for accuracy, speed, and reliability.
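
The strong-versus-eventual trade-off can be simulated with a primary and an asynchronously updated replica. All names here are hypothetical, and replication lag is modeled as a queue that is drained only when `apply_pending` runs.

```python
from collections import deque


class Primary:
    """Toy primary: commits writes immediately and ships them to a replica later."""

    def __init__(self):
        self.data = {}
        self.outbox = deque()  # committed changes not yet applied on the replica

    def write(self, key, value):
        self.data[key] = value            # committed on the primary right away
        self.outbox.append((key, value))  # replicated asynchronously


class Replica:
    def __init__(self):
        self.data = {}

    def apply_pending(self, primary: Primary) -> None:
        # Simulates replication catching up; until then, replica reads may be stale.
        while primary.outbox:
            key, value = primary.outbox.popleft()
            self.data[key] = value


def read(key, primary: Primary, replica: Replica, strong: bool):
    # Strong read goes to the primary; eventual read uses the possibly lagging replica.
    source = primary if strong else replica
    return source.data.get(key)


p, r = Primary(), Replica()
p.write("stock", 10)
r.apply_pending(p)
p.write("stock", 7)  # not yet replicated
print(read("stock", p, r, strong=True), read("stock", p, r, strong=False))  # 7 10
```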

Choosing the Right Pattern Based on Your Business Needs

Choosing the right database pattern depends on your specific business priorities and operational requirements. If your focus is high-volume, low-latency transactions like banking or e-commerce, opt for OLTP. For analytics, large-scale reporting, or historical data analysis, OLAP is ideal. If you need real-time insights on live transactional data without sacrificing performance, HTAP offers a balanced approach.

Consider these factors:

- Data freshness requirements and latency tolerances

- Volume and complexity of analytical queries

- Need for strict transactional consistency versus eventual consistency

- Operational complexity and cost of managing multiple systems versus a unified architecture

Align your choice with your core goals—whether transactional speed, analytical depth, or real-time insights—to select the most suitable database pattern for your business.
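
The rules of thumb in this section can be written down as a tiny decision function. It is deliberately simplistic and hypothetical: it encodes only the guidance above, plus the option of running separate OLTP and OLAP systems, and ignores cost, team skills, and existing infrastructure.

```python
from dataclasses import dataclass


@dataclass
class Requirements:
    needs_transactions: bool          # high-volume, low-latency writes with ACID guarantees
    needs_analytics: bool             # large scans, aggregations, historical reporting
    analytics_needs_live_data: bool   # analytics must reflect transactions in real time


def suggest_pattern(req: Requirements) -> str:
    if req.needs_transactions and req.needs_analytics:
        if req.analytics_needs_live_data:
            return "HTAP"
        # Both workloads, but analytics can tolerate delay: separate systems fed by ETL.
        return "OLTP + OLAP"
    if req.needs_analytics:
        return "OLAP"
    return "OLTP"


print(suggest_pattern(Requirements(True, True, True)))  # HTAP
```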

Frequently Asked Questions

How Do HTAP Systems Prevent OLTP and OLAP Resource Conflicts?

You prevent OLTP and OLAP resource conflicts in HTAP systems by using resource isolation techniques like dedicated compute paths, separate storage layers, or workload-specific scheduling. These systems often implement workload management tools that allocate resources dynamically, ensuring heavy analytical queries don’t interfere with transactional operations. Additionally, some HTAP architectures run OLTP and OLAP workloads on separate nodes or in separate processes, maintaining performance and consistency for both.
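
Workload-specific scheduling often comes down to admission control: cap how many analytical queries run at once so transactional work always has headroom. The `AdmissionController` below is a hypothetical sketch built on `threading.BoundedSemaphore`; real databases expose this through their own workload-management features, whose names and settings vary by product.

```python
import threading
import time


class AdmissionController:
    """Hypothetical admission control that caps concurrent analytical queries."""

    def __init__(self, max_concurrent: int):
        self._slots = threading.BoundedSemaphore(max_concurrent)
        self._lock = threading.Lock()
        self.running = 0  # queries currently executing
        self.peak = 0     # highest concurrency observed

    def run(self, query):
        # Blocks until a slot is free, so excess analytical queries wait in line
        # instead of competing with transactional work for CPU and I/O.
        with self._slots:
            with self._lock:
                self.running += 1
                self.peak = max(self.peak, self.running)
            try:
                return query()
            finally:
                with self._lock:
                    self.running -= 1


analytics = AdmissionController(max_concurrent=2)


def slow_scan() -> str:
    time.sleep(0.05)  # stand-in for a long analytical scan
    return "done"


threads = [threading.Thread(target=analytics.run, args=(slow_scan,)) for _ in range(6)]
for t in threads:
    t.start()
for t in threads:
    t.join()
print(analytics.peak)  # at most 2
```

Transactional queries would bypass this controller entirely, which is the point: only the analytical side is throttled.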

Can a Single System Effectively Support Both OLTP and OLAP Workloads?

Yes, a single system can support both OLTP and OLAP workloads, but it’s a balancing act. The system has to serve quick transactions and complex queries at the same time, so you must manage contention for CPU, memory, and I/O and keep transactional response times predictable. Hybrid architectures often use dual stores (a row store alongside a column store) or otherwise isolate the two workloads, so that analytical queries run efficiently without slowing down transactions or sacrificing analytical accuracy.

What Are the Main Trade-Offs When Choosing HTAP Over Separate Systems?

You weigh the benefits of HTAP against separate systems by considering trade-offs. HTAP simplifies architecture, reduces data duplication, and enables real-time analytics, but it introduces complexity in balancing workloads and resource contention. Separate systems offer optimized performance and strict SLAs for each workload but increase operational overhead, data latency, and integration challenges. Ultimately, you must decide if the convenience of a unified system outweighs the performance and scalability benefits of separation.

How Does Data Freshness Impact Decision-Making in HTAP Architectures?

In HTAP architectures, data freshness directly shapes decision-making, because analytics run on live transactional data rather than on a delayed copy. If you need sub-second insights to prevent fraud or optimize operations, prioritizing fresh data is essential. Conversely, needs that are less time-sensitive can tolerate slight delays, letting you favor consistency and system stability.

What Factors Influence the Cost and Complexity of Implementing HTAP Solutions?

You should consider factors like system complexity, data architecture, and resource management. Implementing HTAP requires integrating or separating storage and compute layers, which increases design and maintenance efforts. Balancing transactional and analytical workloads demands sophisticated resource scheduling, leading to higher costs. Additionally, ensuring data consistency, managing backups, and monitoring performance adds to operational complexity. These elements collectively influence the overall cost and difficulty of deploying effective HTAP solutions.

Conclusion

By comparing OLTP, OLAP, and HTAP, you can find the right balance for your data needs. Each pattern has its strengths: OLTP for fast, reliable transactions, OLAP for deep analysis, and HTAP for real-time insight on live data. Weigh your workload type, data freshness, and consistency requirements, and choose the pattern that best supports your business goals.